Archive

SQL Server Denali – Paging

The introduction of paging within SQL Server Denali will have made a significant number of developers happy, many of whom will have previously created home-baked solutions to the same problem. All the solutions have the same underlying problem – paging is by its nature inefficient. Most solutions use the ROW_NUMBER() function, and then sub-select data from that. For a large dataset that presents a problem – the data has to be fully scanned, sorted and allocated row numbers. A suitable index can eliminate the sort operator, but you still end up scanning the entire index to allocate the row numbers.
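For context, the kind of home-grown approach described above usually looks something like the following. This is only a sketch, assuming the AdventureWorks Production.Product table used later in this post; the CTE name and the page boundaries (rows 11 to 20) are illustrative choices rather than anything taken from a specific solution.

```sql
-- Pre-Denali paging sketch: number every row, then keep a single page.
-- NumberedProducts and the 11..20 range are illustrative assumptions.
WITH NumberedProducts AS
(
    SELECT ProductID,
           Name,
           ROW_NUMBER() OVER (ORDER BY Name ASC) AS RowNum
    FROM Production.Product
)
SELECT ProductID, Name
FROM NumberedProducts
WHERE RowNum BETWEEN 11 AND 20   -- second page of 10 rows
ORDER BY RowNum;
```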

In Denali, the ORDER BY clause has been extended to include a starting offset and syntax to denote how many rows we wish to return. The syntax can be examined on MSDN (http://msdn.microsoft.com/en-us/library/ms188385%28v=SQL.110%29.aspx#Offset) and in brief is:

ORDER BY order_by_expression

[ COLLATE collation_name ]

[ ASC | DESC ]

[ ,...n ]

[ <offset_fetch> ]

<offset_fetch> ::=

{

OFFSET { integer_constant | offset_row_count_expression } { ROW | ROWS }

[

FETCH { FIRST | NEXT } {integer_constant | fetch_row_count_expression }

{ ROW | ROWS } ONLY

]

}

Seeing this new syntax made me want to try it out and see how the query plans are affected. I am using the trusty Adventure Works as usual – a version for Denali has been put on CodePlex, so one quick download later and I was ready to test the new syntax. (Adventure Works download : http://msftdbprodsamples.codeplex.com/releases/view/55330 )

For my tests, I used the Production.Product table, and wished to page the products based on their name. There is a non-clustered index on the Name field of the product table as well as a clustered index on ProductID, so what would the query plan give?

select * from Production.Product order by name asc offset 10 rows fetch first 10 rows only

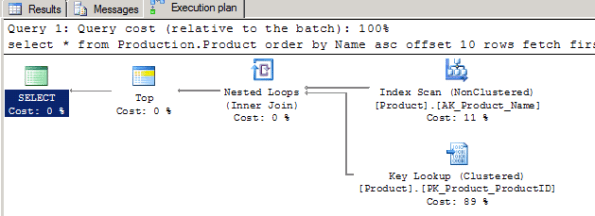

And the query plan is not very surprising

So even with a new syntax the underlying problem remains: the nature of paging is that you are scanning the data. With STATISTICS IO turned on, the stats come back with Table ‘Product’. Scan count 1, logical reads 15, which is not particularly exciting and is what we would expect given the table is contained within 15 pages. It was because of the stats though that I noticed an anomaly. In one of the tests, I had dropped to returning only a single row from the table as follows:

select * from Production.Product order by name asc offset 10 rows fetch first 1 rows only

What I noticed was that the statistics changed to Table ‘Product’. Scan count 1, logical reads 24 – the entire table is contained within 15 pages, so how could it jump to reading 24?

A quick check of the query plan showed what had changed: the engine decided that it was cheaper to use the Name index, which for the purposes of the ordering was narrower and therefore more efficient, and then join back to the main table via the clustered key. Understandable, although the additional pages read are unlikely to make this more efficient, and I doubt you would see much real world difference. An oddity, but nothing really significant in it.

This triggered a more interesting thought: what happens if we reduce our fields so that the index is considered a covering index? Is SQL going to get smart when making a selection? So far we have only seen full table scans occurring.

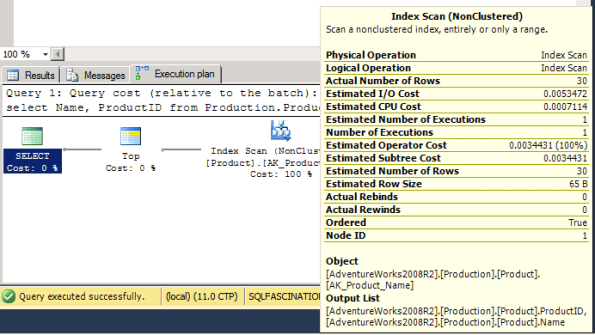

select Name, ProductID from Production.Product order by name asc offset 20 rows fetch first 10 rows only

The query is now being covered by the name index since the non-clustered index includes the clustered key (ProductID) – and this changes the query plan again, although it's a pretty subtle change to notice.

The expected index scan appears, but if you look closely at the tooltip for the scan, the number of rows being read in the scan is not the total number of rows in the index, but the sum of the offset and the number of rows requested. This was also reflected within the statistics, showing only 2 logical reads – the index uses 6 pages in total. As I changed the number of rows to offset / return, the actual number of rows read changed accordingly. So with a covering index in place, the query engine gets a bit more efficient and does a forward scan of the index until the point at which we have passed a sufficient number of rows. This sounds good – we have avoided scanning the whole index to provide the paged results in a slightly more efficient manner.

Except those with a quick mind will realise that the performance degrades as you go further and further down the list: requesting the 490-500th products will result in 500 rows being checked, not 30. By putting in a covering index we have sacrificed consistency on query times to gain some potential performance – the full-scan solutions will broadly speaking take the same time regardless of which 10 rows you might be requesting, since they have to scan, sort, allocate numbers and then sub-select.
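To make the degradation concrete, the page position can be driven from variables. This is a hedged sketch rather than one of the original tests: @PageNumber and @PageSize are hypothetical names, and the row count in the comment simply restates the offset-plus-fetch behaviour observed above.

```sql
-- Hypothetical page variables; not part of the original tests.
DECLARE @PageNumber int = 50;   -- 1-based page to fetch
DECLARE @PageSize   int = 10;   -- rows per page

SELECT Name, ProductID
FROM Production.Product
ORDER BY Name ASC
OFFSET (@PageNumber - 1) * @PageSize ROWS
FETCH NEXT @PageSize ROWS ONLY;

-- Going by the behaviour described above, the covering index scan would be
-- expected to read about (@PageNumber - 1) * @PageSize + @PageSize rows,
-- which is 500 for page 50 compared with 30 for page 3.
```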

As features go, I like the paging – it removes the need for all the different homegrown solutions that are out there, but the performance of it remains a problem – this is no silver bullet to paging performance problems that people have.

Immutable Primary Key vs Immutable Clustered Key

It is often said that a primary key should be immutable, and this advice is echoed on a multitude of sites some of which strengthen it to a ‘law’ – but we know with databases that absolutes are rare and it is very difficult to be 100% prescriptive. There is then no mention of the clustering key being immutable alongside it, which strikes me as strange since it is just as important.

What happens within SQL Server to the row if you change the clustered key?

- If you change the clustered key value the row must be physically moved and can result in page splits / fragmentation.

- A change of the clustered key requires all the non-clustered indexes to be updated to reflect the new clustering key value.

And if you change the primary key?

- A change of the primary key has to be reflected in each of the other tables that use the key as a linking mechanism.

- The primary key must still uniquely identify the row within the table.

Clearly different issues, but why does the primary key immutability get so much attention and not the clustering key? The default behaviour of SQL Server is that the primary key becomes the clustering key, so in essence all 4 points get applied, but you can choose a different primary key to the clustering key.

What sort of expense are we talking about if we allow the clustering key to be mutable and start altering a row’s clustered key value? To get a better understanding of what is going on under the hood, I’ll construct an example and check the transaction log.

Creating a simple table in SQL and inserting a few rows to set up the test is pretty easy:

CREATE TABLE [dbo].[MyTable]( [MyID] [int] IDENTITY(1,1) NOT NULL, [FirstName] [varchar](20) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [SecondName] [varchar](30) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, CONSTRAINT [PK_Table_1] PRIMARY KEY CLUSTERED ( [FirstName] ASC )WITH (IGNORE_DUP_KEY = OFF) ON [PRIMARY] ) ON [PRIMARY]

Insert a few rows and then issue a simple update statement.

update mytable set firstname = 'TestFirstName', Secondname = 'TestSecondName' where MyID = 2

Inspect the transaction log and it is noticeable that the log does not contain a LOP_MODIFY_ROW or LOP_MODIFY_COLUMNS within it, but contains a LOP_DELETE_ROWS and a LOP_INSERT_ROWS. Instead of just modifying the data, SQL has removed the row and reinserted it. A few other items appear with the transaction, LOP_BEGIN_XACT and LOP_COMMIT_XACT as you would expect to start and commit the transaction. There is also a LOP_SET_BITS on the LCX_PFS which is not surprising to see either, since we have potentially affected the free space level of the page the data was inserted into.

That maps to exactly what we expect from a high level logical perspective – the row has to be moved and there is no LOP_MOVE_ROW operation. This results in the row being placed into the transaction log twice, as a before and after.

What happens if we perform an update that does not include the clustered key?

update mytable set Secondname = 'AnotherTestSecondName' where MyID = 3

This time the log only includes 3 entries: the LOP_BEGIN_XACT / LOP_COMMIT_XACT pair and a single LOP_MODIFY_ROW, which is more as you would expect.

Size wise, the transaction log entry length for the first alteration was 96 + 148 + 56 + 160 + 52 = 512 bytes. For the second entry it was only 96 + 144 + 52 = 292. So the alteration used more log space and due to write ahead logging it must be committed to the disk, but the actual difference for a single row does not look too significant.
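The post does not say how the log records were read. One common way, shown here only as a sketch, is the undocumented and unsupported fn_dblog function, which exposes the operation names and record lengths quoted above. It is best kept to a test database, and the column list below is a selection rather than the full output.

```sql
-- fn_dblog is undocumented and unsupported; use it on a test database only.
-- Run this in the database that holds dbo.MyTable, straight after the update.
SELECT [Current LSN],
       [Transaction ID],
       Operation,             -- e.g. LOP_DELETE_ROWS, LOP_INSERT_ROWS
       Context,               -- e.g. LCX_CLUSTERED, LCX_PFS
       AllocUnitName,
       [Log Record Length]    -- the per-record byte counts summed above
FROM fn_dblog(NULL, NULL)     -- NULL, NULL = no start or end LSN filter
ORDER BY [Current LSN];
```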

Well, whilst it does not look significant, you have to remember that the row being modified was very small. As previous examples have shown the LOP_DELETE_ROWS and LOP_INSERT_ROWS include the entire contents of the row being removed / added, so with a larger row the entire contents of the row would be added to the log twice, compared to the simple modification. That would start to get expensive.

So altering the clustering key is clearly expensive for the transaction log in comparison to a normal update, and this example did not have additional non-clustered indexes added to the table, which would also then require even more entries to deal with the removal and re-insertion of the non-clustered index values.

Given a choice I would make both immutable; the primary key shouldn’t be the only one to get special treatment and be designed to be immutable, make the clustering key immutable as well.

Locating Table Scans Within the Query Cache

Some time back, Pinal Dave published a blog entry with an example of an XML script that used XQuery to examine the query cache – the script in itself is a very useful example of using XQuery against the query plans, but doesn’t quite hit the mark in terms of being an invaluable performance tuning script since it provides an information overload and doesn’t help locate those annoying query problems.

Using it as inspiration however, you might find this useful when tracking down dodgy queries. A number of key items have been added:

- The database the scan has occurred in.

- The schema the scan has occurred in.

- The table name the scan has been performed on.

Understandably, very useful fields to additionally expose, since these allow filtering of the results to exclude tables that are of no interest due to their size (small dimension / lookup tables for example.)

WITH XMLNAMESPACES(DEFAULT N'http://schemas.microsoft.com/sqlserver/2004/07/showplan'), CachedPlans (DatabaseName,SchemaName,ObjectName,PhysicalOperator, LogicalOperator, QueryText,QueryPlan, CacheObjectType, ObjectType) AS ( SELECT Coalesce(RelOp.op.value(N'TableScan[1]/Object[1]/@Database', N'varchar(50)') , RelOp.op.value(N'OutputList[1]/ColumnReference[1]/@Database', N'varchar(50)') , RelOp.op.value(N'IndexScan[1]/Object[1]/@Database', N'varchar(50)') , 'Unknown' ) as DatabaseName, Coalesce( RelOp.op.value(N'TableScan[1]/Object[1]/@Schema', N'varchar(50)') , RelOp.op.value(N'OutputList[1]/ColumnReference[1]/@Schema', N'varchar(50)') , RelOp.op.value(N'IndexScan[1]/Object[1]/@Schema', N'varchar(50)') , 'Unknown' ) as SchemaName, Coalesce( RelOp.op.value(N'TableScan[1]/Object[1]/@Table', N'varchar(50)') , RelOp.op.value(N'OutputList[1]/ColumnReference[1]/@Table', N'varchar(50)') , RelOp.op.value(N'IndexScan[1]/Object[1]/@Table', N'varchar(50)') , 'Unknown' ) as ObjectName, RelOp.op.value(N'@PhysicalOp', N'varchar(50)') as PhysicalOperator, RelOp.op.value(N'@LogicalOp', N'varchar(50)') as LogicalOperator, st.text as QueryText, qp.query_plan as QueryPlan, cp.cacheobjtype as CacheObjectType, cp.objtype as ObjectType FROM sys.dm_exec_cached_plans cp CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) st CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) qp CROSS APPLY qp.query_plan.nodes(N'//RelOp') RelOp (op) ) SELECT DatabaseName,SchemaName,ObjectName,PhysicalOperator , LogicalOperator, QueryText,CacheObjectType, ObjectType, queryplan FROM CachedPlans WHERE CacheObjectType = N'Compiled Plan' and ( PhysicalOperator = 'Clustered Index Scan' or PhysicalOperator = 'Table Scan' or PhysicalOperator = 'Index Scan')

The final alteration is the limitation of the results to only those query plans that include scans, although you could use this to target hash matches or other potentially expensive operations that indicate there is a query plan / indexing opportunity to investigate.

Using this script makes it far easier to run through the query cache and can easily be further modified to include a link to the sys.dm_exec_query_stats via the plan_handle so you could also pull the execution count for the queries with the scans to further prioritize performance tuning work.
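As a rough illustration of that last suggestion, the sketch below aggregates sys.dm_exec_query_stats per plan_handle and joins it to the cached plans. It is an assumption about how the link might be made, not part of the original script; the column aliases are invented for the example, and the derived table could equally be joined into the CachedPlans CTE above.

```sql
-- Sketch: execution counts and logical reads per cached plan.
-- A plan can contain several statements, so the stats are summed per plan_handle.
SELECT cp.plan_handle,
       cp.objtype,
       qs.TotalExecutions,
       qs.TotalLogicalReads
FROM sys.dm_exec_cached_plans AS cp
JOIN (
        SELECT plan_handle,
               SUM(execution_count)     AS TotalExecutions,
               SUM(total_logical_reads) AS TotalLogicalReads
        FROM sys.dm_exec_query_stats
        GROUP BY plan_handle
     ) AS qs
  ON qs.plan_handle = cp.plan_handle
WHERE cp.cacheobjtype = N'Compiled Plan'
ORDER BY qs.TotalExecutions DESC;
```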

Why is SQL Azure and Index Fragmentation a Bad Combination?

I’ve been thinking through and experimenting a bit more with some of the concepts in SQL Azure – specifically I was considering the impact of fragmentation on both the storage (in terms of the storage limit) as well as the maintenance. This is not a new issue, DBAs face fragmentation regularly and can deal with it in a variety of ways, but with SQL Azure the problem looks magnified by a lack of tools and working space. Whilst looking into this, I then realised that there is an unfortunate consequence of not knowing how much data space your index is actually using.

Each table in SQL Azure has to have a clustered index if data is going to be inserted into it, and clustered indexes can suffer from fragmentation if chosen poorly. The combination of SQL Azure and time-honoured fragmentation has three consequences. Fragmentation:

- will occur and you have no way in which to measure it due to the lack of DMV support.

- will create wasted space within your space allocation limit.

- will reduce your performance.

You could work it out if you knew how much space you had actually used vs. what the size of the data held is, but we are unable to measure either of those values. If you have chosen the data compression option on the index then even those values would not give you a fragmentation ratio.

This leaves us with a situation in which you cannot know how fragmented you are, meaning you either:

- Schedule a regular index rebuild.

- Hope SQL Azure performs index rebuilds for you.

I’m not aware of SQL Azure doing this for you – and you do not have SQL Agent facilities either.

So this seems very wrong: the concept of SQL Azure is to take away a lot of the implementation details and hassle from the subscriber – DR and failover are handled etc. But there looks to be a gap into which certain items such as fragmentation are falling – I have not seen any documentation saying SQL Azure handles it (but there could be some hidden somewhere and I hope there is!) and neither are you given the right tools with which to program and handle it yourself.

What happens when you hit that size limit?

Msg 40544, Level 20, State 5, Line 1 The database has reached its size quota. Partition or delete data, drop indexes, or consult the documentation for possible resolutions. Code: 524289

That took a lot of time to get to, (SQL Azure is not fast), but was generated using a simple example that would also demonstrate fragmentation.

Create Table fragtest ( id uniqueidentifier primary key clustered, padding char(3000) )

Very simple stuff, deliberately using a clustered key on a GUID to cause a decent level of fragmentation, and also using the fixed-width character padding field to ensure 2 rows per page only, maximising the page splits.

insert into fragtest values (newid(), replicate('a',1000))
go 200000

Because of the randomness of the newid() function, the level of fragmentation is not predictable but will certainly occur – in my test I hit the wall on 196,403 records and failed with an out of space message.

Given the 2 rows per page and the number of rows, with ~0% fragmentation the data should occupy about 767 MB – that is considerably short of 1 GB – so there is a significant level of fragmentation in there wasting space, about 23% of it. If you include the 2 KB per page being wasted by the awkward row size then the actual raw data stored is roughly 60% of the overall size, allowing for row overheads etc.
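The 767 MB figure can be reproduced with a little arithmetic. The sketch below only restates the numbers from the test (196,403 rows, 2 rows per page) and assumes the standard 8 KB SQL Server page; the variable names are illustrative.

```sql
-- Figures from the test above; 8 KB is the standard SQL Server page size.
DECLARE @RowsInserted int = 196403;
DECLARE @RowsPerPage  int = 2;
DECLARE @PageSizeKB   int = 8;

SELECT CEILING(@RowsInserted / (1.0 * @RowsPerPage)) AS PagesIfUnfragmented,
       CEILING(@RowsInserted / (1.0 * @RowsPerPage)) * @PageSizeKB / 1024.0
           AS SizeMBIfUnfragmented;   -- comes out at roughly 767 MB
```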

So there are two important points from this contrived example:

- You can lose significant space from bad design.

- Doing this backs you into a corner that you will not be able to get out of – this is the worst part.

How are you cornered? Well, try to work out how to get out of the situation and defrag the clustered index / free up the space. You could:

- Attempt an index rebuild.

- Try to rebuild it with SORT_IN_TEMPDB.

- Drop the index.

- Delete data.

The first three fail. SORT_IN_TEMPDB is not supported, and would not have rescued the situation either since you have no working space in which to write the newly sorted rows prior to removing the old ones. So do you really want to delete data? I don’t think we can consider that an option for now.

This all seems like a ‘rock’ and a ‘hard place’; whilst SQL Azure can support these data quantities, it seems prudent that you never consider actually going close to them at all – and that you equally are going to find it difficult to understand if you are close to them, since there is no way of measuring the fragmentation. The alternative is that you manually rebuild indexes on a regular basis to control fragmentation, but then enough free space is going to have to be left to allow you to rebuild your largest index without running out of space – reducing your data capacity significantly.

The corner is not entirely closed off, the way out of the corner would be to create another SQL Azure database within my account and select the data from database1.fragtest to database2.fragtest and then drop the original table and transfer it back – not ideal but it would work in an emergency.

I think the key is to design to make sure you do not have to face this issue; keep your data quantities very much under the SQL Azure size limits, and watch for the potential of tables being larger than the remaining space and preventing a re-index from occurring.

Interested to know your thoughts on this one, and what other consequences of being close to the limit will come out.

Inconsistent Date Literal Parameterization Behaviour

I have mentioned query parameterization before and the process by which SQL extracts literal values from a query and re-writes the query in effect to use parameters to get a query plan cache hit, which negates the need to recompile the plan costing both time and CPU. There are a lot of good articles and book chapters that cover the topic.

What has confused me for a while is witnessing date literals within a query being parameterized on one query and not on another, even though both databases have parameterization set to ‘Simple’ mode. When a date literal is not parameterized the chances of getting a query plan cache hit are obviously very low, which has performance impacts. The problem to date has been that I had been unable to ascertain the commonality and requirements that allowed the query to get parameterized, and when it just kept the literal. I was paying too much attention to the query and as it turns out not enough to the table.

Well, after a number of hours getting to know the brick wall very well I finally tracked it down, and oddly it had nothing to do with the query I was submitting. I could reproduce it reliably by adding an unrelated non-clustered index, which is confusing to say the least, and I cannot yet think of any reason why. Is it a bug, or just ‘Weird and Odd’?

The following steps reproduce the issue in both SQL Server 2005 and 2008.

Create a database with default settings, nothing specific, then the second step is to create our test table; a simple structure will suffice.

CREATE TABLE [dbo].[paramtest]( [id] [int] IDENTITY(1,1) NOT NULL, [somedate] [datetime] NOT NULL, [somefield] [varchar](50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, CONSTRAINT [PK_paramtest] PRIMARY KEY CLUSTERED ( [id] ASC )WITH (IGNORE_DUP_KEY = OFF) ON [PRIMARY] ) ON [PRIMARY]

We need some data to work against just to make sure we are selecting results, and we can insert these trivially as follows:

insert into [paramtest] values (getdate(),'a')
go 10000

So we now have 10k rows within the table, and a clustered primary index on the identity column.

The test starts with freeing up the procedure cache, and then running the select statement, the datetime I used was roughly in the middle of the range of values I had inserted but is not a deciding factor in the query plan results.

dbcc freeproccache select * from paramtest where somedate > '2009-11-12 21:14:50.000'

Using a standard query plan cache extraction query the specific line of the xml plan we are interested in is the SQL Statement.

<StmtSimple StatementText="(@1 varchar(8000))SELECT * FROM [paramtest] WHERE [somedate]>@1" StatementId="1" StatementCompId="1" StatementType="SELECT" StatementSubTreeCost="0.0379857" StatementEstRows="3831.48" StatementOptmLevel="TRIVIAL">

From it you can see the literal value was extracted as @1 with a type of varchar(8000) and the query altered to use this parameter – this is exactly the behaviour we would expect from simple parameterization.
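The "standard query plan cache extraction query" mentioned above is not shown in the post. A minimal sketch of one, assuming the test table is still called paramtest, might look like this; the filter that excludes the extraction query itself is just a convenience.

```sql
-- Sketch: pull the cached statement text and XML plan for the test query.
SELECT st.text       AS QueryText,
       qp.query_plan AS QueryPlan,
       cp.objtype,
       cp.usecounts
FROM sys.dm_exec_cached_plans AS cp
CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle)   AS st
CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) AS qp
WHERE st.text LIKE N'%paramtest%'
  AND st.text NOT LIKE N'%dm_exec_cached_plans%';  -- skip this query itself
```

Opening the QueryPlan column shows the StmtSimple element, where StatementText either carries the @1 parameter or the original literal.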

Next step is to create a non-clustered index on the varchar ‘somefield’ – completely unrelated to the date literal being used, and should have no impact on the query at all.

CREATE NONCLUSTERED INDEX [ix_test] ON [dbo].[paramtest] ([somefield] ASC )WITH (SORT_IN_TEMPDB = OFF, DROP_EXISTING = OFF, IGNORE_DUP_KEY = OFF, ONLINE = OFF) ON [PRIMARY]

Free the procedure cache up again and rerun the query

dbcc freeproccache select * from paramtest where somedate > '2009-11-12 21:14:50.000'

Extract the query plan again from the cache, but this time it is noticeably different: the parameterisation has not occurred. The literal has remained in the statement text:

<StmtSimple StatementText="select * from paramtest where somedate > '2009-11-12 21:14:50.000'" StatementId="1" StatementCompId="1" StatementType="SELECT" />

To revert to the old plan, drop the index:

DROP INDEX [ix_test] ON [dbo].[paramtest] WITH (ONLINE = OFF)

Then clear the cache again and run the query

select * from paramtest where somedate > '2009-11-12 21:14:50.000'

And we are back to being parameterized.

So the application of a single non-clustered index on a separate field to the one being queried is preventing the simple parameterization mode from parameterizing the date literal – this makes absolutely no sense, and you can play around with it a lot more knowing what is causing the effect on the query plan. Even placing the additional non-clustered index on the identity field, which already has a clustered index, results in the parameterization failing. If this behaviour is by design, then it makes for an interesting design or limitation on the parameterization.

As soon as the database is put in ‘Forced’ parameterization mode, the literal is converted each time, so this looks specific to simple mode, but is not explainable, just demonstrable.
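For anyone wanting to repeat the comparison between the two modes, the switch is a database-level setting. The sketch below uses [ParamTestDb] as a placeholder name, since the post does not name the test database.

```sql
-- [ParamTestDb] is a placeholder for the test database name.
ALTER DATABASE [ParamTestDb] SET PARAMETERIZATION FORCED;

-- Confirm the current mode (1 = forced, 0 = simple).
SELECT name, is_parameterization_forced
FROM sys.databases
WHERE name = N'ParamTestDb';

-- Switch back to the default once the test is complete.
ALTER DATABASE [ParamTestDb] SET PARAMETERIZATION SIMPLE;
```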

Can a Covering NC Index be Tipped?

Non-clustered indexes normally have a ‘tipping point’, which is the point at which the query engine decides to change strategies from seeking the index and using a nested loop operator to look up rows in the underlying table, to just scanning the underlying table and ignoring the index. Kimberly Tripp wrote a great article about ‘The Tipping Point’, and the guidance is that the query engine will change strategies at about 25-33% of the number of pages in the table, expressed as rows.

If the non-clustered index is a covering index (it contains all the fields within the query) the query engine does not take the same decision – it makes sense that if any change in strategy occurs, it would have to be at a far higher figure, and as we are about to see, it will not take that decision and tip.

To test what strategy the engine would use I created a test situation of 2 separate tables, with different page counts, due to the padding column forcing the second table to use far more pages (5953 pages vs 9233)

CREATE TABLE [dbo].[tblIxTest1]( [PersonnelID] [int] IDENTITY(1,1) NOT NULL, [FirstName] [char](30) NULL, [LastName] [char](30) NULL, [Department] [char](30) NULL, [SomePadding] [char](10) NULL ) ON [PRIMARY]

And,

CREATE TABLE [dbo].[tblIxTest2]( [PersonnelID] [int] IDENTITY(1,1) NOT NULL, [FirstName] [char](30) NULL, [LastName] [char](30) NULL, [Department] [char](30) NULL, [SomePadding] [char](1000) NULL ) ON [PRIMARY]

Next step was to insert some data. I needed random data to ensure the index was not unbalanced in some way, so I broke out my useful little random string generation function. I should mention how to create this: a SQL function does not directly support a Rand() call within it, and any attempt to do this results in the error:

Msg 443, Level 16, State 1, Procedure test, Line 13

Invalid use of a side-effecting operator 'rand' within a function.

However, there is nothing stopping a view from using this, and the function from using the view to get around the limitation:

Create View [dbo].[RandomHelper] as Select Rand() as r

And then the function can be generated to use this, it is not necessarily the most efficient random string generation function, but it works nicely.

CREATE FUNCTION [dbo].[RandomString] (@Length int) RETURNS varchar(100) WITH EXECUTE AS CALLER AS BEGIN DECLARE @Result Varchar(100) SET @Result = '' DECLARE @Counter int SET @Counter = 0 WHILE @Counter < @Length BEGIN SET @Result = @Result + Char(Ceiling((select R from randomhelper) * 26) + 64) SET @Counter = @Counter + 1 END RETURN(@Result) END

This now allows me to generate random data and insert it into the tables to get a nice data distribution, and this was run for both of the tables.

insert into tblIxTest1 values (dbo.RandomString(20),dbo.RandomString(20),dbo.RandomString(20),'')
go 1000000

Two NC indexes are now needed, one for each table and both are identical and cover just the FirstName and PersonnelID fields within the table.

CREATE NONCLUSTERED INDEX [IX_Test1] ON [dbo].[tblIxTest1] ( [FirstName] ASC, [PersonnelID] ASC )WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]
GO
CREATE NONCLUSTERED INDEX [IX_Test2] ON [dbo].[tblIxTest2] ( [FirstName] ASC, [PersonnelID] ASC )WITH (STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]
GO

The setup is complete and it is pretty easy to now show the NC covering index is not going to tip, the most extreme where clause is where I am allowing every record to be returned:

select personnelid , firstname from tblixtest1 where firstname >= 'a' and firstname <= 'zzzzzzzzzzzzzzzzzzzzz'

This still produces a query plan with a seek strategy, regardless of which of my two tables it was executed on:

select personnelid , firstname from tblixtest1 where firstname >= 'a' and firstname <= 'zzzzzzzzzzzzzzzzzzzzz'

|--Index Seek(OBJECT:([FilteredIndexTest].[dbo].[tblIxTest1].[IX_Test1]), SEEK:([FilteredIndexTest].[dbo].[tblIxTest1].[FirstName] >= [@1] AND [FilteredIndexTest].[dbo].[tblIxTest1].[FirstName] <= [@2]) ORDERED FORWARD)

If we just select the entire table, unsurprisingly at that point it chooses to perform an index scan.

select personnelid , firstname from tblixtest1

Results in the following plan:

select personnelid , firstname from tblixtest1 |--Index Scan(OBJECT:([FilteredIndexTest].[dbo].[tblIxTest1].[IX_Test1]))

The row counts on both queries were identical at 1 million. Slightly more interesting is that if I use a Like clause instead of a direct string evaluation, the behaviour alters slightly when selecting all the values:

select personnelid , firstname from tblixtest1 where firstname like '[a-z]%'

Gives the query plan:

select personnelid , firstname from tblixtest1 where firstname like '[a-z]%' |--Index Scan(OBJECT:([FilteredIndexTest].[dbo].[tblIxTest1].[IX_Test1]), WHERE:([FilteredIndexTest].[dbo].[tblIxTest1].[FirstName] like '[a-z]%'))

So the query engine is potentially making an optimisation that it knows the like clause covers 100% and adopts an automatic scan, but it is not really very clear why it has this optimisation path. If the like clause changes to [a-y] then it reverts back to a seek, so it looks specific to covering all the values within the like statement. If a between statement is used, it remains a seek regardless.

So the result is that a non-clustered covering index is very unlikely to tip. You either have to not give it a where clause, or use a like statement across all the values available; otherwise it will steadfastly refuse to scan and choose the seek.

Why?

Well, the I/O cost of the operation remains the same: it has to read every page in the index, and it considers the cost of traversing the B-Tree negligible, so the difference between seek and scan is not very great. Running the seek based query and scan based query in the same batch, the relative percentages are 48% vs 52% – that is the scan scoring 52% even though they read the same number of rows.

Outputting the IO statistics when they are run side by side shows the same number of pages being read, but the seek is clearly being favoured and is slightly faster as far as SQL is concerned – it is quite weird to consider a seek of an entire index is more efficient than a scan of the index.

(1000000 row(s) affected) Table 'tblIxTest1'. Scan count 1, logical reads 5995, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0. (1000000 row(s) affected) Table 'tblIxTest1'. Scan count 1, logical reads 5995, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

So if you come across a covering index in a query plan that is scanning, it would be worth investigating as to whether it is intended. The chances are it is more likely that the index field order is not supporting the predicates being used than that the engine has chosen to tip the index like it would for the non-covering non-clustered indexes.

Do Filtered Indexes Have Different Tipping Points?

I’ve been taking a look at filtered indexes and they do initially look particularly useful, but I was wondering what effect the filtered index has on the tipping point. The tipping point was described by Kimberly L. Tripp in an excellent post, and is the point at which SQL opts to change the query plan strategy from a NC index seek to a scan of some kind (Table / Clustered Index).

So I was wondering whether Filtered indexes chose a different strategy, or would they ‘tip’ from a targeted index to a table scan in the same way.

First off was to create a dummy table:

create table tblPersonnel ( PersonnelID Int Identity, FirstName Char(30), LastName Char(30), Department Char(30), SomePadding Char(250) )

This was deliberately created as a heap, since data had to be generated and loaded:

insert into tblPersonnel(firstname,lastname,Department) values( dbo.randomstring(20), dbo.randomstring(20), dbo.randomstring(20));
go 1000000

The dbo.randomstring is a user created function I added to generate a random string [A-Z] the length of the function parameter, so the insert statement is inserting random strings of length 20 into the first name, last name and department fields.

Once the data was loaded, a clustered index was applied.

alter table dbo.tblPersonnel add constraint PK_tblPersonnel primary key clustered(PersonnelID) with( statistics_norecompute = off, ignore_dup_key = off, allow_row_locks = on, allow_page_locks = on) on [PRIMARY]
GO

A quick check of the table:

select COUNT(*) from tblpersonnel ----------- 1000000 (1 row(s) affected)

To get a rough idea of the tipping point we need to know the number of pages at the leaf level of the table.

select * from sys.objects where name ='tblpersonnel'
-- grab the object id and query the physical stats.
select * from sys.dm_db_index_physical_stats(DB_ID('FilteredIndexTest'), 1117247035 , 1,0,'DETAILED')
-- 45455 pages shown in the table at leaf level 0 i.e. the clustered index
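The manual object id lookup can be avoided by resolving the id inline. The following is a sketch of that variation, not what was run in the original test; it assumes the database is named FilteredIndexTest as above and returns every index and level rather than just the clustered index.

```sql
-- Sketch: resolve the object id with OBJECT_ID() instead of hard-coding it.
SELECT index_id,
       index_level,      -- 0 = leaf level
       page_count,
       record_count
FROM sys.dm_db_index_physical_stats(
         DB_ID(N'FilteredIndexTest'),
         OBJECT_ID(N'FilteredIndexTest.dbo.tblPersonnel'),
         NULL,           -- all indexes
         NULL,           -- all partitions
         'DETAILED')
ORDER BY index_id, index_level;
```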

So create an NC index on the first name, last name.

create nonclustered index [IX_Normal] ON [dbo].[tblPersonnel] ( [FirstName] asc, [LastName] asc ) with( pad_index = off, statistics_norecompute = off, ignore_dup_key = off, allow_row_locks = on, allow_page_locks = on) on [PRIMARY]
GO

For reference, we will also check how many pages the NC index has:

select * from sys.dm_db_index_physical_stats(DB_ID('FilteredIndexTest'), 1117247035 , 2,0,'DETAILED') -- Level 0 of the index shows 8696 pages

The tipping point is expected at between 25% and 33% of the number of pages when expressed as rows, so somewhere between 11363 and 15000 rows is where to expect it. Since the data was random when inserted, a bit of human binary chop is needed to find the tipping point. After some experimentation, the dividing point was between the firstname like ‘a[a-h]%’ and ‘a[a-i]%’:

select * from tblpersonnel where firstname like 'a[a-h]%' -- 11898 rows - seeks
select * from tblpersonnel where firstname like 'a[a-i]%' -- 13299 rows - scans
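The expected range quoted above follows directly from the leaf page count of the clustered index. As a quick worked check, using only the 45455 page figure from the earlier physical stats query (the variable name is illustrative):

```sql
-- Tipping point guidance: 25% to 33% of the leaf page count, read as a row count.
DECLARE @LeafPages int = 45455;

SELECT @LeafPages * 0.25 AS LowerBoundRows,   -- roughly 11,364
       @LeafPages * 0.33 AS UpperBoundRows;   -- roughly 15,000
```

Both observed row counts, 11898 (seek) and 13299 (scan), sit inside that range.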

So the tipping point showed up within the expected range, so next I created the filtered index based on FirstName ‘>=a’ and ‘<= ak’ since I can not use a like clause in the Filtered Index where statement.

create nonclustered index [IX_Filter] ON [dbo].[tblPersonnel] ( [FirstName] asc, [LastName] asc ) where [FirstName] >= 'a' and [FirstName] <= 'ak' with( pad_index = off, statistics_norecompute = off, ignore_dup_key = off, allow_row_locks = on, allow_page_locks = on) on [PRIMARY]
GO

The filtered index is in play, so I disabled the non-filtered index and re-ran the same queries.

select * from tblpersonnel where firstname like 'a[a-h]%' -- 11898 rows - seeks
select * from tblpersonnel where firstname like 'a[a-i]%' -- 13299 rows - scans
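The step of disabling the non-filtered index is not shown above. One way it could be done, offered as a sketch rather than the exact statement used in the test, is:

```sql
-- Disable the unfiltered index so only IX_Filter is available to the optimizer.
ALTER INDEX [IX_Normal] ON [dbo].[tblPersonnel] DISABLE;

-- A disabled index is re-enabled by rebuilding it once the test is finished.
ALTER INDEX [IX_Normal] ON [dbo].[tblPersonnel] REBUILD;
```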

Conclusion: The tipping point did not alter based on the filtered index – it still tips based on the ratio of rows to total pages in the leaf level of the clustered index.